Disclaimer: Views in this blog do not promote, and are not directly connected to any L&G product or service. Views are from a range of L&G investment professionals, may be specific to an author’s particular investment region or desk, and do not necessarily reflect the views of L&G. For investment professionals only.

Engines of intelligence: Scaling the frontier

AI models continue to improve, leading to disagreements about their effects on the labour market.

For millennia, people thought only certain types of matter could move as living things do. While humans could draw images or imagine stars arranged in patterns that looked like, say, a bear, creating 'animate' entities from 'inanimate' matter was thought impossible. Today, a bear's movement is understood as an electrochemical process, not some special property of the matter itself. And creating new organisms, far from being impossible, now has a name: synthetic biology.

Our understanding of intelligence is as hazy as ancient concepts of motion. Some have doubted it could ever be created artificially, but AI's recent improvements have reopened this debate. The pattern recognition, learning, and discovery that AI models are capable of are not explicitly specified in their programming; rather, they are emergent properties of the program itself.

How to measure success remains contested, but AI's central question is how far these properties might generalise, and what their ultimate limits are.

The scores, they go up

AI's progress has been remarkable in both depth and breadth. ChatGPT, which initially responded only in text and occasionally gave nonsensical answers, now works across multiple modalities and can readily draft code or legal documents. Moreover, the cost of producing a unit of model output (a token) has collapsed, falling even faster than the cost of computer memory did in the 1990s.

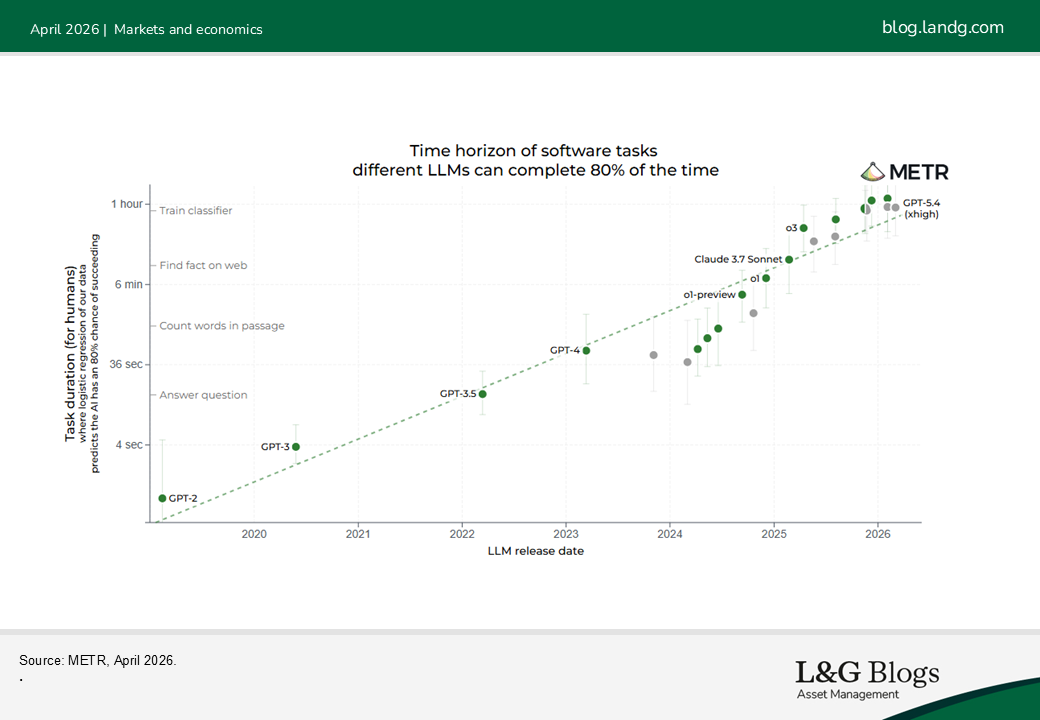

As costs fall, new capabilities are being tested. Speculation is strongest around agentic systems that can act on a user's behalf. In May, Gemini, Google's* AI model, became the first non-human to complete a game of Pokémon, and OpenAI's GPT-5 achieved the same feat, in a faster time, a few months later. METR, an AI research body, found that the length of tasks AI programs can complete is doubling every 215 days, with error rates halving every four months.

AI: The ‘jagged’ frontier

Although progress has been strong, it has not been uniform. The puzzle at the centre of AI performance is the 'jagged frontier': AI models can tackle hard problems, like writing code or predicting the weather, yet struggle with simpler questions. The question 'How many "r"s are in the word "strawberry"?' became famous for how hard AI models found it. While models later overcame this error, the persistence of such imperfections makes economy-wide impacts hard to gauge.

Have we seen this movie before?

Nevertheless, models' strong performance in areas like medical diagnosis and software engineering has ignited concerns about human displacement. We touched on these concerns previously, but opinions have since hardened into two distinct camps.

The first camp argues AI is a 'benign' technology, like PCs and electricity, both of which also caused unemployment anxieties. They say the productivity gains associated with AI are in line with past technologies, and that the immediate human displacement some predicted has not happened, even in fields where AI dominates. Moreover, they argue that new technologies take time to implement, which prevents sudden drops in labour demand.

The second camp worries AI will deliver a disruptive labour market shock. They emphasise AI's rapid pace of improvement and the general, cross-domain nature of its capabilities. They fear that AI improvements will continue indefinitely, boosting GDP but eroding labour's share of income. In this world, they claim, large volumes of high-paying work will be done by AI, and the roles where humans dominate will grow ever smaller.

We see sense on both sides. Even if AI performs well, it likely won't be implemented immediately. The work most exposed to AI is also overrepresented in the public imagination: lawyers and software engineers make up only 2% of the US labour force, so their wholesale replacement (if it happened) needn't be calamitous. Economic theory generally finds productivity-enhancing technology beneficial for employment and wages. However, the limits of AI performance are highly uncertain, and there's a risk the future doesn't conform to current macroeconomic theory.

With this in mind, we remain watchful for evidence that AI is hitting labour markets, and for AI systems becoming rivalrous with human workers. One lesson we draw from the animate-inanimate misconception is that all theories, no matter how august or long-lasting, can always be improved upon.

This is the third in a series of blog posts covering the buildout of generative AI. Previous instalments covered the infrastructure buildout and the geopolitical implications of AI. The final instalment will compare the current equity market mood with the exuberance of the late 1990s.

*For illustrative purposes only. Reference to a particular security is on a historic basis and does not mean that the security is currently held or will be held within an L&G portfolio. The above information does not constitute a recommendation to buy or sell any security.
